Collaborative robotics has been a reality for about ten years and is now used in applications ranging from machine tool loading to quality control and end-of-line packaging.

Ease of installation and a rapid return on investment have been the main growth drivers of this technology, which is carving out an increasing role in the wider robotics sector.

According to the Statistics Department of the International Federation of Robotics (IFR), collaborative robot installations represent a constantly growing share of the market, rising from 2.8% in 2017 to 4.8% in 2019. This growth comes mainly from new markets and new applications, and takes only a minimal share away from more traditional robotics.

The very first installations of collaborative robotic systems were designed to completely relieve humans of demanding and dangerous tasks. From the simple manipulation of objects to the more recent use in welding, the most exploited capabilities were ease of use, programming and reprogramming. The collaborative nature of these robots is perceived as valuable mostly during installation and while teaching new tasks.

This substitution paradigm will be short-lived. According to research by McKinsey & Company, less than 5% of tasks are fully automatable, at least with current technology, while more than 40% are at least 50% automatable. It follows that we should expect an increasing number of truly collaborative applications, with robots working side by side with human workers.

Is it just a matter of time, the time between first installations and widespread diffusion? Or is it a matter of technology? Probably both. It is not surprising that an analysis carried out by Boston Consulting Group finds that more than 90% of the companies interviewed are not yet able to take full advantage of next-generation robotics.

However, one thing is certain: looking to the future means preparing to face the most complicated challenges. These are the applications (far more numerous, as we have seen) in which humans cannot be completely replaced by automation, and in which the two natures, the human and the artificial, must coexist and serve each other.

This is probably where research and innovation efforts should be concentrated. Robots are of course equipped with sophisticated techniques to ensure the safety of operators, and applications are always certified according to standards. But safety, although necessary, is not the only enabling factor for collaboration between humans and robots.

Collaboration, strictly speaking, requires something more than mere coexistence, which is sometimes only occasional. Collaborating, from the late Latin collabōrare, which means working together, is a relatively simple and natural activity for two people. Perhaps the same is true for two robots. Between humans and robots, however, the levels of effectiveness and reliability are still not satisfactory. Why? Combining two components that are so different from each other, and that speak different languages, is certainly not a walk in the park.

People do not communicate with each other only in natural language. They do so in many other ways, not necessarily verbal. Gestures, body language and expressiveness are all means of communication that a person can interpret easily, but that are difficult for a machine to understand.

First of all, we are talking about robots that have very limited sensory abilities. They are only occasionally equipped with vision systems, and certainly not for observing the “human colleague”.

Observing and understanding a scene such as the workspace, and relating its elements in space and time, are activities that we perform naturally, without even realizing it. But how could a robot do the same?

As far as the “sense organs” are concerned, the answer is easy. Artificial vision technologies are now refined enough to obtain several megapixels (a smartphone camera has at least 12 megapixels) from a sensor of a few square centimetres. The resolution of the images is not the real problem, nor is their level of detail. To date, the weak point is the ability to distinguish and relate the different elements of a scene.

Cognitive vision, that is, the set of techniques ranging from image analysis (computer vision) to machine learning, will probably be the keystone for the further development of collaborative robotics. Sensors and cameras, together with sophisticated algorithms, will allow robots to understand the context in which they and their “human colleagues” operate. Robots will be able to share their workspace with humans and be ready to take over when needed, supporting humans instead of completely replacing them.

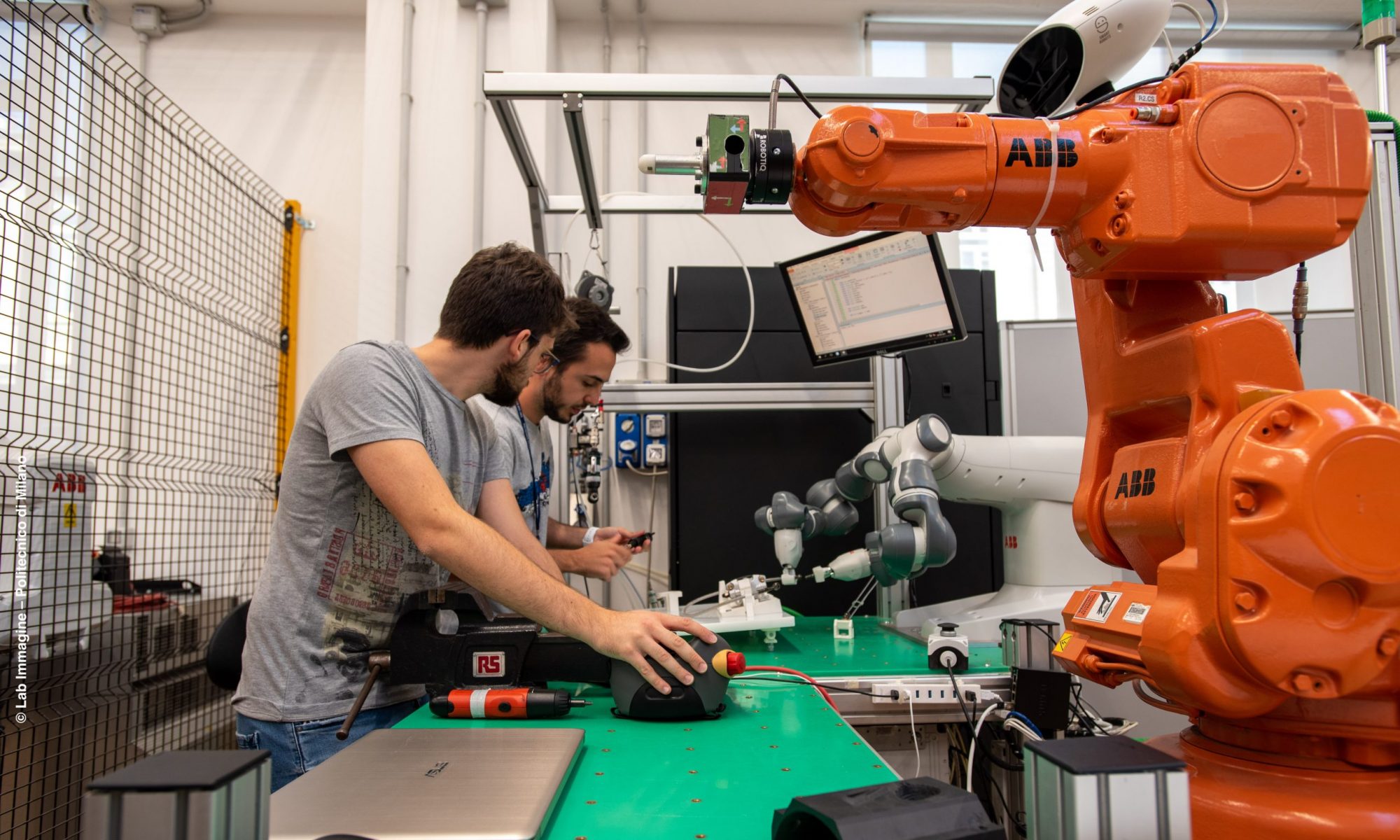

In our lab at Politecnico di Milano, we are seriously tackling this problem. We aim to combine computer vision, object detection, and artificial reasoning to facilitate collaboration between humans and robots in manufacturing assembly tasks.

The video shows a human-robot collaborative assembly application for automotive components, namely the rear braking system of a motorbike. The worker is responsible for assembling the oil tank and securing it to the pump, while a collaborative robot, the ABB YuMi, performs the pre-assembly of the oil tube connection from the caliper to the pump. Finally, the operator tightens three screws, two with a pneumatic ratchet and one with an electric screwdriver.

Object detection and human tracking are combined to understand the ongoing activity of the human operator, and to synchronise the robot accordingly.
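As a rough illustration of this idea, and not the method used in our lab, the following Python sketch combines a tracked hand position with detected object bounding boxes to guess the operator's current activity, then picks a robot action to match. All names here (`Box`, `ACTIVITY_BY_OBJECT`, `ROBOT_ACTION_BY_ACTIVITY`, the object labels, the action names and the proximity margin) are hypothetical and chosen only for the example. A real system would take detections and hand positions from actual perception modules and would reason over time rather than on a single frame.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box of a detected object (normalised image coordinates)."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, x: float, y: float, margin: float = 0.0) -> bool:
        """True if the point (x, y) lies inside the box enlarged by `margin`."""
        return (self.x_min - margin <= x <= self.x_max + margin
                and self.y_min - margin <= y <= self.y_max + margin)


# Hypothetical mapping: which object the hand is near -> presumed human activity.
ACTIVITY_BY_OBJECT = {
    "oil_tank": "assembling_tank",
    "pneumatic_ratchet": "tightening_screws",
    "electric_screwdriver": "tightening_screws",
}

# Hypothetical mapping: presumed human activity -> robot action.
ROBOT_ACTION_BY_ACTIVITY = {
    "assembling_tank": "preassemble_oil_tube",
    "tightening_screws": "hold_and_wait",
    "idle": "wait",
}


def infer_activity(hand_xy: Tuple[float, float],
                   detections: Iterable[Box],
                   margin: float = 0.05) -> str:
    """Guess the activity from the first known object the hand is close to."""
    x, y = hand_xy
    for box in detections:
        if box.label in ACTIVITY_BY_OBJECT and box.contains(x, y, margin):
            return ACTIVITY_BY_OBJECT[box.label]
    return "idle"


def next_robot_action(activity: str) -> str:
    """Choose the robot action for an activity; unknown activities make the robot wait."""
    return ROBOT_ACTION_BY_ACTIVITY.get(activity, "wait")


if __name__ == "__main__":
    detections = [
        Box("oil_tank", 0.10, 0.10, 0.30, 0.30),
        Box("electric_screwdriver", 0.60, 0.60, 0.80, 0.70),
    ]
    activity = infer_activity((0.20, 0.25), detections)
    print(activity, next_robot_action(activity))
```

The two lookup tables keep the perception step (what is the human doing?) separate from the synchronisation step (what should the robot do?), so either can be replaced independently, for example by a learned activity classifier or a workflow model.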

References:

- Fraunhofer Institute for Industrial Engineering (IAO), “Lightweight robots in manual assembly – best to start simply!”, 2016

- McKinsey Global Institute, “A future that works: automation, employment, and productivity”, 2017

- Boston Consulting Group, “Advanced Robotics in the Factory of the Future”, 2019

- N. Lucci, A. Monguzzi, A.M. Zanchettin, P. Rocco, “Workflow modelling for human-robot collaborative assembly operations”, Robotics and Computer-Integrated Manufacturing, 2022